Installing Kafka with the Bitnami Helm Chart

2023/1/4 4:24:01


Deploying Kafka Server on a Server-Side K3S Cluster

Installing Kafka

📚️ Quote:

charts/bitnami/kafka at master · bitnami/charts (github.com)

Run the following commands to add the Helm repositories:

> helm repo add tkemarket https://market-tke.tencentcloudcr.com/chartrepo/opensource-stable
"tkemarket" has been added to your repositories
> helm repo add bitnami https://charts.bitnami.com/bitnami
"bitnami" has been added to your repositories

🔥 Tip:

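The tkemarket mirror is not kept up to date, so the bitnami repository is recommended.

However, bitnami is hosted overseas, so there is a risk that it cannot be reached.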

Search for the kafka Helm chart:

> helm search repo kafka
NAME                      CHART VERSION  APP VERSION  DESCRIPTION
tkemarket/kafka           11.0.0         2.5.0        Apache Kafka is a distributed streaming platform.
bitnami/kafka             15.3.0         3.1.0        Apache Kafka is a distributed streaming platfor...
bitnami/dataplatform-bp1  9.0.8          1.0.1        OCTO Data platform Kafka-Spark-Solr Helm Chart
bitnami/dataplatform-bp2  10.0.8         1.0.1        OCTO Data platform Kafka-Spark-Elasticsearch He...
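Since the two repositories carry very different chart versions, it can help to read the version out of the search result and pin it at install time. The following is a minimal sketch, assuming the default table output shown above; chart_version_of is a hypothetical helper name and not part of Helm.

```shell
# Print the CHART VERSION column for one chart, reading
# `helm search repo` table output from stdin.
# chart_version_of is a hypothetical helper, not a Helm command.
chart_version_of() {
  awk -v name="$1" '$1 == name { print $2 }'
}

# Usage (requires helm and the repositories added above):
#   helm search repo kafka | chart_version_of bitnami/kafka
# The result can then be pinned with helm's --version flag:
#   helm install kafka bitnami/kafka --version 15.3.0 ...
```

Pinning the chart version keeps a later reinstall from silently picking up a newer chart whose values may differ.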

Install Kafka with the bitnami Helm chart:

helm install kafka bitnami/kafka \
  --namespace kafka --create-namespace \
  --set global.storageClass=<storageClass-name> \
  --set kubeVersion=<theKubeVersion> \
  --set image.tag=3.1.0-debian-10-r22 \
  --set replicaCount=3 \
  --set service.type=ClusterIP \
  --set externalAccess.enabled=true \
  --set externalAccess.service.type=LoadBalancer \
  --set externalAccess.service.ports.external=9094 \
  --set externalAccess.autoDiscovery.enabled=true \
  --set serviceAccount.create=true \
  --set rbac.create=true \
  --set persistence.enabled=true \
  --set logPersistence.enabled=true \
  --set metrics.kafka.enabled=false \
  --set zookeeper.enabled=true \
  --set zookeeper.persistence.enabled=true \
  --wait

🔥 Tip:

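The parameters are explained below:

- --namespace kafka --create-namespace: install into the kafka namespace, creating it if it does not exist;
- global.storageClass=<storageClass-name>: use the specified StorageClass;
- kubeVersion=<theKubeVersion>: lets the bitnami/kafka chart check whether the cluster meets its version requirement; if it does not, the release cannot be created;
- image.tag=3.1.0-debian-10-r22: the latest image as of 2022-02-19; specifying the full tag keeps image pulls from the internet to a minimum;
- replicaCount=3: run 3 Kafka replicas;
- service.type=ClusterIP: create the kafka service, which is used inside the k8s cluster, so ClusterIP is sufficient;
- externalAccess.enabled=true, externalAccess.service.type=LoadBalancer, externalAccess.service.ports.external=9094, externalAccess.autoDiscovery.enabled=true, serviceAccount.create=true, rbac.create=true: create the kafka-<0|1|2>-external services used for access from outside the k8s cluster (three of them, because the Kafka replica count above is 3);
- persistence.enabled=true: persist Kafka data; the directory inside the container is /bitnami/kafka;
- logPersistence.enabled=true: persist Kafka logs; the directory inside the container is /opt/bitnami/kafka/logs;
- metrics.kafka.enabled=false: do not enable Kafka monitoring (Kafka metrics are collected through kafka-exporter);
- zookeeper.enabled=true: this Kafka deployment depends on ZooKeeper, so ZooKeeper is installed with it;
- zookeeper.persistence.enabled=true: persist ZooKeeper data; the directory inside the container is /bitnami/zookeeper;
- --wait: the helm command blocks until the resources have been created and are ready.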
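With this many --set flags, the same settings may be easier to review and version-control as a values file. The sketch below simply transcribes the flags above into YAML; the file name kafka-values.yaml is an assumption, and the two angle-bracket placeholders still have to be replaced with real values.

```shell
# Write the --set flags from the install command as a values file.
# kafka-values.yaml is an assumed file name; pick any name you like.
cat > kafka-values.yaml <<'EOF'
global:
  storageClass: "<storageClass-name>"  # placeholder: your StorageClass
kubeVersion: "<theKubeVersion>"        # placeholder: your cluster version
image:
  tag: 3.1.0-debian-10-r22
replicaCount: 3
service:
  type: ClusterIP
externalAccess:
  enabled: true
  service:
    type: LoadBalancer
    ports:
      external: 9094
  autoDiscovery:
    enabled: true
serviceAccount:
  create: true
rbac:
  create: true
persistence:
  enabled: true
logPersistence:
  enabled: true
metrics:
  kafka:
    enabled: false
zookeeper:
  enabled: true
  persistence:
    enabled: true
EOF

# Then install with the same release name and namespace as above:
#   helm install kafka bitnami/kafka --namespace kafka --create-namespace \
#     -f kafka-values.yaml --wait
```

The keys mirror the dotted paths of the flags one to one, so either form should produce the same release for this chart version.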

The output is as follows:

creating 1 resource(s)

creating 12 resource(s)

beginning wait for 12 resources with timeout of 5m0s

Service does not have load balancer ingress IP address: kafka/kafka-0-external

...

StatefulSet is not ready: kafka/kafka-zookeeper. 0 out of 1 expected pods are ready

...

StatefulSet is not ready: kafka/kafka. 0 out of 1 expected pods are ready

NAME: kafka

LAST DEPLOYED: Sat Feb 19 05:04:53 2022

NAMESPACE: kafka

STATUS: deployed

REVISION: 1

TEST SUITE: None

NOTES:

CHART NAME: kafka

CHART VERSION: 15.3.0

APP VERSION: 3.1.0

---------------------------------------------------------------------------------------------

WARNING

By specifying "serviceType=LoadBalancer" and not configuring the authentication

you have most likely exposed the Kafka service externally without any

authentication mechanism.

For security reasons, we strongly suggest that you switch to "ClusterIP" or

"NodePort". As alternative, you can also configure the Kafka authentication.

---------------------------------------------------------------------------------------------

** Please be patient while the chart is being deployed **

Kafka can be accessed by consumers via port 9092 on the following DNS name from within your cluster:

kafka.kafka.svc.cluster.local

Each Kafka broker can be accessed by producers via port 9092 on the following DNS name(s) from within your cluster:

kafka-0.kafka-headless.kafka.svc.cluster.local:9092

To create a pod that you can use as a Kafka client run the following commands:

kubectl run kafka-client --restart='Never' --image docker.io/bitnami/kafka:3.1.0-debian-10-r22 --namespace kafka --command -- sleep infinity

kubectl exec --tty -i kafka-client --namespace kafka -- bash

PRODUCER:

kafka-console-producer.sh \

--broker-list kafka-0.kafka-headless.kafka.svc.cluster.local:9092 \

--topic test

CONSUMER:

kafka-console-consumer.sh \

--bootstrap-server kafka.kafka.svc.cluster.local:9092 \

--topic test \

--from-beginning

To connect to your Kafka server from outside the cluster, follow the instructions below:

NOTE: It may take a few minutes for the LoadBalancer IPs to be available.

Watch the status with: 'kubectl get svc --namespace kafka -l "app.kubernetes.io/name=kafka,app.kubernetes.io/instance=kafka,app.kubernetes.io/component=kafka,pod" -w'

Kafka Brokers domain: You will have a different external IP for each Kafka broker. You can get the list of external IPs using the command below:

echo "$(kubectl get svc --namespace kafka -l "app.kubernetes.io/name=kafka,app.kubernetes.io/instance=kafka,app.kubernetes.io/component=kafka,pod" -o jsonpath='{.items[*].status.loadBalancer.ingress[0].ip}' | tr ' ' '\n')"

Kafka Brokers port: 9094
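Because each broker gets its own external IP, an external client usually needs a comma-separated host:port list as its bootstrap servers. The sketch below builds that list from the IPs and the external port 9094; build_bootstrap_servers is a hypothetical helper name, and the kubectl call in the usage comment is a simplified variant of the command in the notes above.

```shell
# Join a port and a list of IPs into "ip1:port,ip2:port,...".
# build_bootstrap_servers is a hypothetical helper, not part of Kafka or kubectl.
build_bootstrap_servers() {
  port="$1"
  shift
  out=""
  for ip in "$@"; do
    # Add a comma separator only when the list is already non-empty.
    out="${out:+$out,}${ip}:${port}"
  done
  printf '%s\n' "$out"
}

# Usage (requires kubectl access to the cluster):
#   ips=$(kubectl get svc --namespace kafka \
#     -o jsonpath='{.items[*].status.loadBalancer.ingress[0].ip}')
#   build_bootstrap_servers 9094 $ips   # unquoted on purpose: split on spaces
```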

Testing and Verifying Kafka

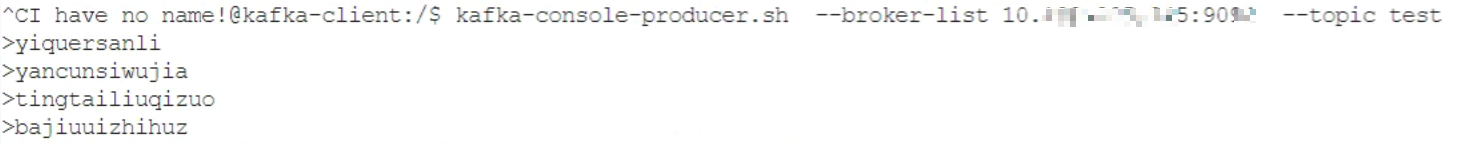

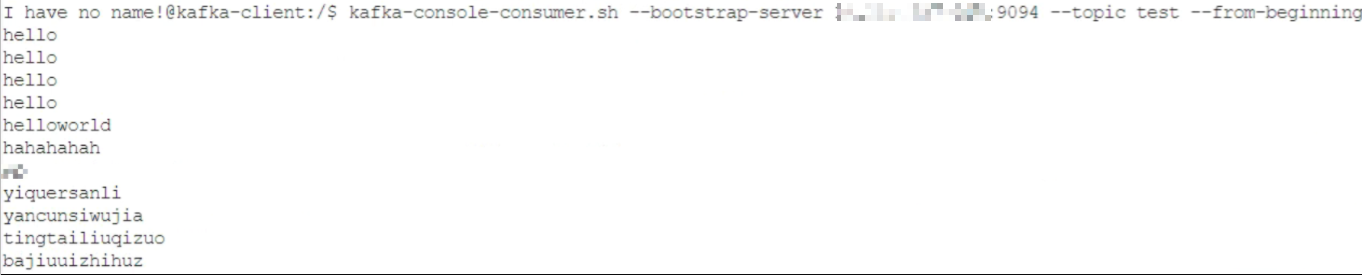

Test messages:

First, create a kafka-client pod with the following command:

kubectl run kafka-client --restart='Never' --image docker.io/bitnami/kafka:3.1.0-debian-10-r22 --namespace kafka --command -- sleep infinity

Then open a shell in kafka-client and run the following commands to test:

kafka-console-producer.sh --broker-list kafka-0.kafka-headless.kafka.svc.cluster.local:9092 --topic test
kafka-console-consumer.sh --bootstrap-server kafka-0.kafka-headless.kafka.svc.cluster.local:9092 --topic test --from-beginning
kafka-console-producer.sh --broker-list 10.109.205.245:9094 --topic test
kafka-console-consumer.sh --bootstrap-server 10.109.205.245:9094 --topic test --from-beginning

The result is as follows:

🎉 With that, the Kafka installation is complete.
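The interactive producer and consumer test can also be scripted as a single round trip, which is handy for repeating the check after an upgrade. This is a minimal sketch meant to run inside the kafka-client pod; kafka_smoke_test is a hypothetical function name, and the 10-second consumer timeout is an arbitrary assumption.

```shell
# Produce one unique message and check that it can be read back.
# kafka_smoke_test is a hypothetical helper; run it where the Kafka
# console scripts are on PATH (for example inside the kafka-client pod).
kafka_smoke_test() {
  bootstrap="$1"
  topic="${2:-test}"
  msg="smoke-$(date +%s)-$$"

  # Send the message through the console producer via stdin.
  printf '%s\n' "$msg" |
    kafka-console-producer.sh --bootstrap-server "$bootstrap" --topic "$topic" ||
    return 1

  # Read the topic from the beginning; stop after 10s without new messages
  # (assumed timeout). Succeeds only if the exact message is seen.
  kafka-console-consumer.sh --bootstrap-server "$bootstrap" --topic "$topic" \
    --from-beginning --timeout-ms 10000 2>/dev/null |
    grep -q -x -F -- "$msg"
}

# Usage:
#   kafka_smoke_test kafka.kafka.svc.cluster.local:9092 test && echo OK
```

The function returns a non-zero status when the message is not read back, so it can be chained with && or used in a script.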

Uninstalling Kafka

⚡ Danger:

(If needed) the command to delete the entire Kafka release:

helm delete kafka --namespace kafka
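Note that Helm does not remove PersistentVolumeClaims created from a StatefulSet's volume claim templates, so the persisted data can outlive the release. The sketch below wraps a fuller cleanup in a dry-run helper; run and cleanup_kafka are hypothetical names, and deleting the whole namespace assumes it holds nothing but this Kafka release. (helm delete is an alias of helm uninstall in Helm 3.)

```shell
# Print commands instead of running them when DRY_RUN=1.
# run and cleanup_kafka are hypothetical helpers for illustration.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    printf '+ %s\n' "$*"
  else
    "$@"
  fi
}

cleanup_kafka() {
  ns="${1:-kafka}"
  # Remove the Helm release itself.
  run helm uninstall kafka --namespace "$ns"
  # Show the PVCs that Helm leaves behind.
  run kubectl get pvc --namespace "$ns"
  # Assumption: the namespace is dedicated to Kafka. Deleting it removes
  # everything left inside, including the PVCs.
  run kubectl delete namespace "$ns"
}

# Preview first:
#   DRY_RUN=1; cleanup_kafka
```

Whether the underlying volume data is also deleted depends on the reclaim policy of the StorageClass in use.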

Summary

Kafka

-

Kafka 通过 Helm Chart bitnami 安装,安装于:K8S 集群的

kafkanamespace;- 安装模式:三节点

- Kafka 版本:3.1.0

- Kafka 实例:3 个

- Zookeeper 实例:1 个

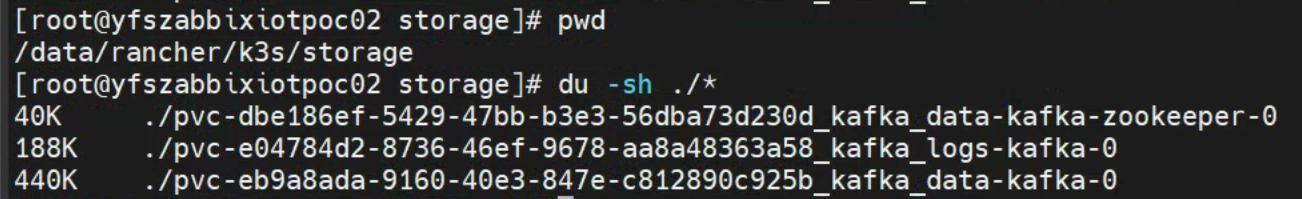

- Kafka、Zookeeper、Kafka 日志均已持久化,位于:

/data/rancher/k3s/storage - 未配置 sasl 及 tls

-

在 K8S 集群内部,可以通过该地址访问 Kafka:

kafka.kafka.svc.cluster.local:9092 -

在 K8S 集群外部,可以通过该地址访问 Kafka:

<loadbalancer-ip>:9094

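A screenshot of Kafka's persisted data is shown below:

Where three walk together, one of them can be my teacher; knowledge shared belongs to all. This article was written by the 东风微鸣 tech blog, EWhisper.cn.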
